Early man did a lot of things by hand, but soon started inventing tools (simple machines in physics) to help him do things quicker and easier. Modern tools are far more complex than these early predecessors.

Tools of Trade

In the modern world, we depend on tools so much that we don't even realize it. See this little Physics activity page to see how many you may be using. Without tools, our lifestyles as we know them would cease to exist. Every trade has associated tools, which we will call tools of the trade (in legalese the term has a more restrictive meaning; see note 1). When we call a plumber, we expect him to come with the necessary tools, such as a wrench. Electricians use a different set of tools, of which the voltage tester may be the most important. If they don't have the right tools, we know what will happen. At the very least, you will be looking up the Yellow Pages to call the next technician.

Software Tool Kit

The IT field borrows a lot of terminology from other fields. We are engineers, technicians and architects who "build" software products; it's just that you won't be able to see the finished products except on your computer screen. While building, running and testing software products, we use a lot of tools. Like the products they help to build, these tools are software themselves. We use compilers, editors, linkers, runtimes etc. to build and execute our software. But unlike a plumber going to Home Depot to get his tools, we can simply download ours. At the same time, while one plumber's tool chest may look much like another's, our tool chest varies depending on the field and the software we build. And unlike a plumber or an electrician, we have the luxury of building our own tools, more so now in the age of Open Source.

Tools I use

As a consultant, I am constantly exposed to different tools at different sites, and for some tasks I tend to keep my own time-tested tools. Here are some Open Source tools I currently use. Oftentimes, I use more than one tool for a task and combine the results. These are mostly for the Windows environment. I will share other tools, and those for Linux, separately.

Windows Grep: I use it to search for text in files. It does the same kind of search that Notepad++ or TextPad would do, but with a little more flexibility, like grep.

Many years ago, I used ExamDiff extensively. Lately, WinMerge is the diff tool I use most frequently. WinMerge helps me compare at the directory level and merge files quickly. There are some limitations, but I love it. I also use KDiff3 and CSDiff, as each of these offers something unique. These are all free software. Another great diff tool I found is Beyond Compare; it will be my next software purchase. The above tools don't provide a good report for a directory compare (Beyond Compare may). For that, I use diffUtils and a nice script I found on the internet to format the output in HTML.
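To give a feel for what such a directory-compare report involves, here is a minimal Python sketch. It is not the script mentioned above and it does not use diffUtils; it relies on Python's standard `filecmp` module instead, and the function names `compare_dirs` and `to_html` are made up for illustration.

```python
import filecmp
import html
import os


def compare_dirs(left, right, prefix=""):
    """Recursively compare two directories and return (status, path) rows."""
    # Note: dircmp skips the content check when size and timestamp match.
    cmp = filecmp.dircmp(str(left), str(right))
    rows = []
    rows += [("only in left", prefix + name) for name in cmp.left_only]
    rows += [("only in right", prefix + name) for name in cmp.right_only]
    rows += [("different", prefix + name) for name in cmp.diff_files]
    # Descend into sub-directories that exist on both sides.
    for name in cmp.common_dirs:
        rows += compare_dirs(
            os.path.join(str(left), name),
            os.path.join(str(right), name),
            prefix + name + "/",
        )
    return rows


def to_html(rows):
    """Render the comparison rows as a simple HTML table, sorted by path."""
    body = "\n".join(
        f"<tr><td>{html.escape(status)}</td><td>{html.escape(path)}</td></tr>"
        for status, path in sorted(rows, key=lambda row: row[1])
    )
    return f"<table>\n<tr><th>Status</th><th>Path</th></tr>\n{body}\n</table>"
```

Writing `to_html(compare_dirs(dir_a, dir_b))` to a file gives a report you can open in a browser or mail to the team.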

Greenshot: This is the open source screen capture tool I use. PicPick is a great tool as well. Again, each of these has some feature that the others lack.

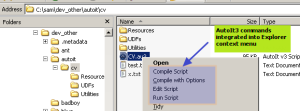

OpenCommandPromptHere: There are several tools of this kind, with names like Command Prompt Here, SendtoToys etc. Essentially, each creates a context menu entry in Windows Explorer, so you can open a command prompt from wherever you are in Explorer (you don't have to CD into any directory manually).

BareTail: I recommended this earlier; I use it to tail Jaguar.log etc. It's really good. You can highlight lines containing different phrases.

yEd: I use this a lot for diagramming. It's more a graph editor than a graphics editor. Works for me. While researching diagramming tools, I found UMLet interesting. Dot, Dotty and GraphViz help me automatically generate some flow diagrams.

Apart from these, I use Ant, Eclipse, JMeter, XAMPP etc. as part of my development environment. I also use LibreOffice (OpenOffice's successor) a lot.

Tools I build

I am always looking for ways to make the computer do the work for me, instead of doing things manually. I feel that if a task has to be done more than once, it deserves a script or a tool. When the tools available are not good enough for a particular task, I try to build some on my own as well. This is also an excellent opportunity to learn new techniques and keep your skills sharp. I do this during down times and/or as part of the main task.

I am sharing some of my tool building experiences here, hoping they will benefit someone else. If you are a software developer and somewhat less than motivated by the stuff you are working on, give tool building a try next time. The software will benefit from it too. All you need is some imagination and enough laziness to resist doing anything manually! Gone are the days when you had to purchase special software to build such tools. You will be amazed to see how much programming capability a standard computer can offer you. For example, if you have Word or Excel, you can build powerful macros (VBA programs). Apart from these, you always have Open Source scripting languages like PERL, Python, PHP etc.

If you recognize the opportunity early enough, the tools you build could become an integral part of a project as well. At Velos, while working on upgrading the database (Sybase SQL Anywhere) to a newer specification, I decided to convert the specification into an Access database. Once the new spec was in a database, I was able to compare existing database elements with the new ones and voilà, the database upgrade script could be generated right out of the database itself. They also had a PowerBuilder application to design screens (datawindows) and save them in the database (blob columns). After running into problems with the blobs, I decided to write a tool to view, edit and correct screens outside of the application. After using this a few more times, I ended up writing a simple scripting language to perform these same tasks in batch mode. Necessity is the mother of invention! Several of these tools were actually used by the support person to identify and fix issues at customer sites.

Some of the casual tools I build for one-time use end up as permanent additions to the team's tool chest. At Capital Group, one developer was collecting batch job stats on a daily basis, and when he went on vacation, he left page-long instructions on how to get these details into Excel. The task was to go to the web pages (someone with a tool mentality had pulled the stats from Autosys into the pages, using CGI-PERL), copy the job names to a spreadsheet and make adjustments so the result was presentable. When my turn came, I got tired of copying, pasting and correcting pretty quickly. Before the next time, I added a "Web Query" in Excel to pull the web pages directly into the spreadsheet and wrote some VBA macros to clean up and present the spreadsheets. Eventually, it became a full-fledged product in its own right, and everyone on the team started using it. Page-long instructions gone. (Of course, when I left, I left a document a few pages long about the macro, but that's another story.)

Similarly, in the same company there was another task that developers and/or analysts had to do. Every now and then the company received fund codes from outside (I didn't know much at the time; these were the GICS codes we received from MSCI) that had to be reconciled against the codes that already existed in the system. Some codes may be replaced with new ones, some may get new descriptions, some may be discontinued. So, they would enter these "changes" in a spreadsheet or Word document and then ask a developer to convert them to SQL (INSERT/UPDATE/DELETE) statements to be put into production. Enter yours truly, the lazy user. I wrote some Excel macros that would import the data from the Oracle table (using ODBC), reconcile it and generate the SQL for the developer to run in production. Yes, it did take a little more time to develop and test, but the next time a similar request came, it was a breeze.
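The reconcile-and-generate idea can be sketched in a few lines of Python. This is only an illustration, not the Excel macro described above: the table name `fund_codes`, the column names `code` and `description`, and the sample codes are all made up, and a real tool would read the current codes from the database instead of a dictionary.

```python
def sql_quote(value):
    """Quote a string literal for SQL by doubling any single quotes."""
    return "'" + value.replace("'", "''") + "'"


def reconcile(current, incoming, table="fund_codes"):
    """Return SQL statements that turn `current` into `incoming`.

    Both arguments map code -> description. Table and column names
    are hypothetical placeholders.
    """
    statements = []
    # Codes that are new in the incoming list.
    for code in sorted(incoming.keys() - current.keys()):
        statements.append(
            f"INSERT INTO {table} (code, description) "
            f"VALUES ({sql_quote(code)}, {sql_quote(incoming[code])});"
        )
    # Codes present on both sides whose description changed.
    for code in sorted(incoming.keys() & current.keys()):
        if incoming[code] != current[code]:
            statements.append(
                f"UPDATE {table} SET description = {sql_quote(incoming[code])} "
                f"WHERE code = {sql_quote(code)};"
            )
    # Codes that were discontinued.
    for code in sorted(current.keys() - incoming.keys()):
        statements.append(f"DELETE FROM {table} WHERE code = {sql_quote(code)};")
    return statements


if __name__ == "__main__":
    current = {"A1": "Alpha", "B2": "Beta", "C3": "Gamma"}
    incoming = {"A1": "Alpha", "B2": "Beta fund", "D4": "Delta"}
    for statement in reconcile(current, incoming):
        print(statement)
```

Generating the statements as text, rather than running them, keeps the developer in the loop: the output can be reviewed before anything touches production.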

An Excel macro dubbed "Query Tool", which I developed at Capital Group, tops them all. It again started as a manual task. The goal was to take some codes (e.g., security codes) from another system and see if they existed in our database. This was done manually too. The user would extract data from the table into a spreadsheet and look up each code. Sometimes codes might have been mistyped, so they had to match partially or look at other attributes to identify and correct them. By the end, they might have gone through a spreadsheet with thousands of codes in several passes, identifying codes from another list they had. Time-consuming, laborious and error-prone. If this is not what the computer does best, what is? So, I started coding Excel macros to do the repeated search for the codes they entered. Little by little, it became a full-fledged querying tool (using Database Query, through ODBC, in Excel). The tab containing the actual SQL helped customize the lists further. What resulted was a nice product. The users loved it.
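The "exact match first, then partial match for mistyped codes" lookup might look something like this in Python. It is a rough sketch under my own assumptions, not the Query Tool itself: it uses the standard `difflib` module for fuzzy matching, and the function name `look_up`, the `cutoff` value and the sample codes are invented for the example.

```python
import difflib


def look_up(codes_to_check, known_codes, cutoff=0.8):
    """Classify each code as an exact match, a close match, or missing.

    Returns a dict: code -> (status, list of candidate codes).
    """
    known_list = sorted(set(known_codes))
    known_set = set(known_list)
    report = {}
    for code in codes_to_check:
        if code in known_set:
            report[code] = ("exact", [code])
            continue
        # Fall back to fuzzy matching to catch likely typos.
        candidates = difflib.get_close_matches(code, known_list, n=3, cutoff=cutoff)
        report[code] = ("close", candidates) if candidates else ("missing", [])
    return report


if __name__ == "__main__":
    known = ["ABC123", "XYZ789", "LMN456"]
    for code, (status, candidates) in look_up(["ABC123", "ABC124", "QQQ000"], known).items():
        print(code, status, candidates)
```

The close matches are only suggestions; someone still has to confirm them against the other attributes, but the thousands of exact matches no longer need a human.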

These one-off solutions may also help you save a project. At FHLB, we were working on a data extraction project. This was part of the data reporting requirements set for the bank by FHFB. I created an Access database as a prototype of a much bigger data warehouse built in Oracle by a team of ETL developers. I kept this as a tool to validate the data/reports from the ETL tools before we sent the data to FHFB. All was going well, but suddenly one day we found a flaw in the data warehouse design, and the multi-million dollar project simply collapsed. Luckily, my boss had seen this risk early and supported my efforts to spruce up my Access database. Believe it or not, my little tool eventually became the official "data reporting tool" for the company, at least for the first year.

Journey Continues..

Recently, one developer asked how to check a file in TextPad for records with an odd length. This was the idea behind this PERL script. Another opportunity for such a script came up where a batch program creates a large file (XML in this case) that has to be split into smaller chunks. A perfect candidate again for a PERL or Python script. I did a quick prototype, but it died down for lack of interest. I hear they do it with tools like an Access DB and a lot of manual "pulling the hair". And then there are tasks like parsing logs and so forth. A well-written script can analyze and report stats from these files in a jiffy.
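For the record-length check, a Python version could be as small as the sketch below. It is not the PERL script mentioned above. I am assuming here that "odd length" means a record whose length differs from the expected one (as in a fixed-width file), and that when no expected length is given, the most common length in the file is the right one. The function name `odd_length_records` is made up.

```python
from collections import Counter


def odd_length_records(lines, expected=None):
    """Return (line_number, length) for each record with an unexpected length.

    If `expected` is not given, the most common record length is assumed
    to be the correct one.
    """
    records = [line.rstrip("\r\n") for line in lines]
    if not records:
        return []
    if expected is None:
        expected = Counter(len(record) for record in records).most_common(1)[0][0]
    return [
        (number, len(record))
        for number, record in enumerate(records, start=1)
        if len(record) != expected
    ]


if __name__ == "__main__":
    sample = ["AAAA\n", "BBBB\n", "CC\n", "DDDD\n"]
    for number, length in odd_length_records(sample):
        print(f"line {number}: length {length}")
```

Since an open file is just a sequence of lines, passing the file object straight in (`odd_length_records(open(path))`) works for files that fit in memory.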

The other day, we were discussing testing for a database upgrade. I brought up the topic of monitoring and my boss said, "You can make yet another tool for it!" I know he was kidding, but I probably will, or if I am in luck, I may be able to just download something from the internet. The journey continues!

1. "Tools of trade" has a different meaning in legalese: the tools your livelihood depends on, which in case of bankruptcy will not be taken away by creditors.

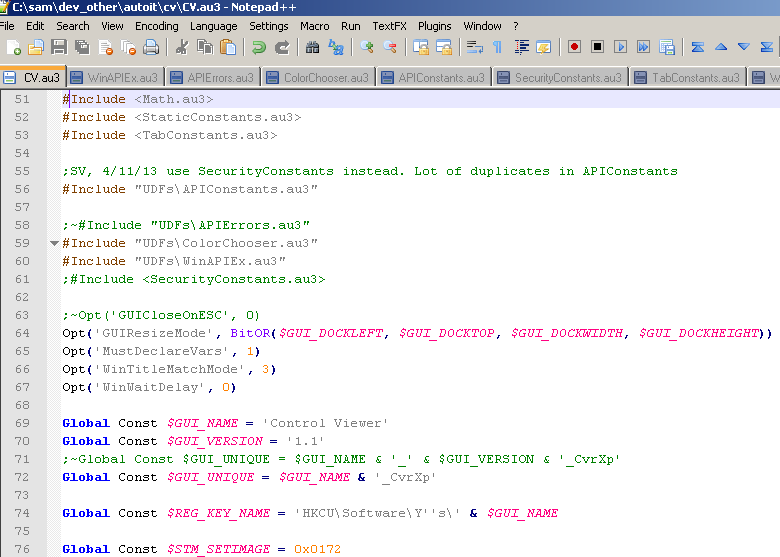

Fig 3 AutoIt3 Script edited in Notepad++


Last week, my Windows PC stopped working. (I have to look into it later.) At the moment, I am working on my taxes and I desperately needed some files from the old machine. Luckily, I've been backing up. For this, I use
